If you want to restart your App Service on a schedule, two PowerShell commands are all you need:

```powershell
Stop-AzureRmWebApp -Name '_App Service Name_' -ResourceGroupName '_Resource Group Name_'
Start-AzureRmWebApp -Name '_App Service Name_' -ResourceGroupName '_Resource Group Name_'
```

You need to solve two problems:

- How do you schedule the PowerShell script to run automatically at given times? A simple scheduled WebJob can do that.

- How do you authenticate the execution? Use a Service Principal Id.

Let’s start with the second one.

Credits: This is an updated and fixed version of a procedure originally published by Karan Singh, a Microsoft employee, on his MSDN blog.

Getting a Service Principal Id for authentication

Using a real user account to authenticate such a job is not a good idea. If your Organizational Account uses two-factor authentication, it will not work at all, because an unattended job cannot answer the second-factor prompt.

The right way to authenticate your jobs is to use a Service Principal Id, which allows silent (non-interactive) authentication.

To create one, save the following PowerShell script and run it once from your PC:

```powershell
param
(
    [Parameter(Mandatory=$true, HelpMessage="Enter Azure Subscription name. You need to be Subscription Admin to execute the script")]
    [string] $subscriptionName,

    [Parameter(Mandatory=$true, HelpMessage="Provide a password for the SPN application that will be created")]
    [string] $password,

    [Parameter(Mandatory=$false, HelpMessage="Provide a SPN role assignment")]
    [string] $spnRole = "Owner"
)

#Initialize
$ErrorActionPreference = "Stop"
$VerbosePreference = "SilentlyContinue"
$userName = $env:USERNAME
$newguid = [guid]::NewGuid()
# Unique display name and identifier URI for the new AAD application
$displayName = [String]::Format("VSO.{0}.{1}", $userName, $newguid)
$homePage = "http://" + $displayName
$identifierUri = $homePage

#Initialize subscription
# Stop early if no AzureRM modules are installed
if (-not (Get-Module -Name AzureRM* -ListAvailable))
{
    Write-Output "Script requires AzureRM modules to be present. Obtain AzureRM from https://github.com/Azure/azure-powershell/releases. Please refer https://github.com/Microsoft/vsts-tasks/blob/master/Tasks/DeployAzureResourceGroup/README.md for recommended AzureRM versions." -Verbose
    return
}

Import-Module -Name AzureRM.Profile
Write-Output "Provide your credentials to access Azure subscription $subscriptionName" -Verbose
Login-AzureRmAccount -SubscriptionName $subscriptionName
$azureSubscription = Get-AzureRmSubscription -SubscriptionName $subscriptionName
$connectionName = $azureSubscription.SubscriptionName
$tenantId = $azureSubscription.TenantId
$id = $azureSubscription.SubscriptionId

#Create a new AD Application
Write-Output "Creating a new Application in AAD (App URI - $identifierUri)" -Verbose
$secpasswd = ConvertTo-SecureString $password -AsPlainText -Force
$azureAdApplication = New-AzureRmADApplication -DisplayName $displayName -HomePage $homePage -IdentifierUris $identifierUri -Password $secpasswd -Verbose
$appId = $azureAdApplication.ApplicationId
Write-Output "Azure AAD Application creation completed successfully (Application Id: $appId)" -Verbose

#Create new SPN
Write-Output "Creating a new SPN" -Verbose
$spn = New-AzureRmADServicePrincipal -ApplicationId $appId
$spnName = $spn.ServicePrincipalName
Write-Output "SPN creation completed successfully (SPN Name: $spnName)" -Verbose

#Assign role to SPN
Write-Output "Waiting for SPN creation to reflect in Directory before Role assignment"
Start-Sleep 20
Write-Output "Assigning role ($spnRole) to SPN App ($appId)" -Verbose
New-AzureRmRoleAssignment -RoleDefinitionName $spnRole -ServicePrincipalName $appId
Write-Output "SPN role assignment completed successfully" -Verbose

#Print the values
Write-Output "`nCopy and Paste below values for Service Connection" -Verbose
Write-Output "***************************************************************************"
Write-Output "Connection Name: $connectionName(SPN)"
Write-Output "Subscription Id: $id"
Write-Output "Subscription Name: $connectionName"
Write-Output "Service Principal Id: $appId"
Write-Output "Service Principal key: <Password that you typed in>"
Write-Output "Tenant Id: $tenantId"
Write-Output "***************************************************************************"
```

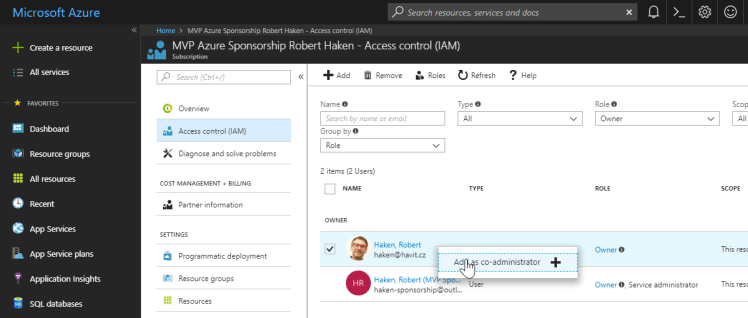

You will be asked for a Subscription Name and Password for the Service Principal Id. You will also need to be an admin on your Azure Active Directory to be able to proceed.

Store the output securely. The created Service Principal Id gets the Owner role (or any other role you specify), so you can reuse it for many other administrative tasks, although it is a good idea to create a separate Service Principal for every task.
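If subscription-wide Owner feels too broad for a restart job, a narrower assignment is an option. The sketch below is not part of the original procedure: `Grant-RestartRole` is a hypothetical helper that assigns the built-in Website Contributor role only on the resource group hosting the app. It assumes the AzureRM.Resources module and an existing `Login-AzureRmAccount` session; whether a resource-group-scoped principal is enough for your setup is worth confirming with a manual run of the WebJob.

```powershell
function Grant-RestartRole {
    # Hypothetical helper: assigns a narrower role to an existing service principal,
    # scoped to one resource group instead of the whole subscription.
    [CmdletBinding(SupportsShouldProcess = $true)]
    param(
        [Parameter(Mandatory = $true)] [string] $ApplicationId,
        [Parameter(Mandatory = $true)] [string] $ResourceGroupName,
        # Built-in role that allows managing (including stopping and starting) web apps
        [string] $RoleDefinitionName = 'Website Contributor'
    )

    $target = "resource group '$ResourceGroupName'"
    # -WhatIf makes ShouldProcess return $false, so nothing is changed in Azure
    if ($PSCmdlet.ShouldProcess($target, "Assign '$RoleDefinitionName' to $ApplicationId")) {
        # Requires AzureRM.Resources and an authenticated session
        New-AzureRmRoleAssignment -ServicePrincipalName $ApplicationId `
            -RoleDefinitionName $RoleDefinitionName `
            -ResourceGroupName $ResourceGroupName
    }
}
```

For example, `Grant-RestartRole -ApplicationId '<Service Principal Id>' -ResourceGroupName '<Resource Group Name>' -WhatIf` previews the assignment without making it; drop `-WhatIf` to apply it.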

Scheduling the restart using a PowerShell WebJob

Use any WebJob deployment method you like to create a scheduled PowerShell WebJob that runs the following script:

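```powershell
# Suppress progress output, which is of no use in a non-interactive WebJob
$ProgressPreference = "SilentlyContinue"
# Stop at the first error instead of running the remaining commands after a failed login
$ErrorActionPreference = "Stop"

# Credentials of the Service Principal created above
$password = '_Service Principal Key/Password_'
$secpasswd = ConvertTo-SecureString $password -AsPlainText -Force
$mycreds = New-Object System.Management.Automation.PSCredential ("_Service Principal Id_", $secpasswd)

# Sign in silently as the Service Principal and select the target subscription
Add-AzureRmAccount -ServicePrincipal -Tenant '_Tenant Id_' -Credential $mycreds
Select-AzureRmSubscription -SubscriptionId '_Subscription Id_'

# Restart the App Service
Stop-AzureRmWebApp -Name '_App Service Name_' -ResourceGroupName '_Resource Group Name_'
Start-AzureRmWebApp -Name '_App Service Name_' -ResourceGroupName '_Resource Group Name_'
```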
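Hard-coding the key in run.ps1 leaves it readable by anyone with access to the site files. One alternative, sketched here under assumptions, is to store the values as App Service application settings, which App Service exposes to WebJobs as environment variables. The setting names `SP_APP_ID`, `SP_KEY` and `SP_TENANT_ID` and the helper `Get-ServicePrincipalCredential` are hypothetical.

```powershell
function Get-ServicePrincipalCredential {
    # Hypothetical helper: builds a PSCredential from environment variables.
    # In App Service, application settings are visible to WebJobs as environment variables.
    param(
        [string] $AppIdVariable = 'SP_APP_ID',
        [string] $KeyVariable = 'SP_KEY'
    )

    $appId = [Environment]::GetEnvironmentVariable($AppIdVariable)
    $key = [Environment]::GetEnvironmentVariable($KeyVariable)
    if ([string]::IsNullOrEmpty($appId) -or [string]::IsNullOrEmpty($key)) {
        throw "Environment variables '$AppIdVariable' and '$KeyVariable' must both be set."
    }

    $secureKey = ConvertTo-SecureString $key -AsPlainText -Force
    New-Object System.Management.Automation.PSCredential ($appId, $secureKey)
}

# In run.ps1, the hard-coded password lines could then become, for example:
# $mycreds = Get-ServicePrincipalCredential
# Add-AzureRmAccount -ServicePrincipal -Tenant $env:SP_TENANT_ID -Credential $mycreds
```

Failing loudly when a setting is missing keeps a misconfigured job from attempting a login with empty credentials.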

For manual deployment, you can use the Azure Portal directly:

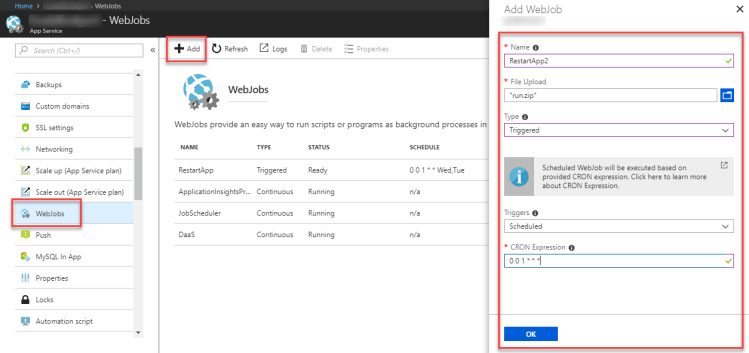

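- Save the script as a run.ps1 file and put it into a ZIP archive (the archive name does not matter).

- Go to App Service / WebJobs, add a new WebJob, upload the ZIP archive, and choose the Triggered type with a Scheduled trigger and a CRON expression.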
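A WebJob can also carry its schedule in a settings.job file next to run.ps1, which is handy when you deploy the files yourself (for example into `site\wwwroot\App_Data\jobs\triggered\<job name>`). WebJob schedules use a six-field CRON expression (`{second} {minute} {hour} {day} {month} {day-of-week}`), so `0 0 3 * * *` means 03:00 every day, evaluated in UTC unless the app's time zone is configured otherwise. The sketch below packages both files into a ZIP; the function name `New-RestartWebJobPackage` is hypothetical, and it assumes PowerShell 5.0 or later for `Compress-Archive`.

```powershell
function New-RestartWebJobPackage {
    # Hypothetical helper: zips a script as run.ps1 together with a settings.job schedule.
    param(
        [Parameter(Mandatory = $true)] [string] $ScriptPath,
        [Parameter(Mandatory = $true)] [string] $ZipPath,
        # Six fields: second minute hour day month day-of-week (here: 03:00 every day)
        [string] $Schedule = '0 0 3 * * *'
    )

    if (($Schedule.Trim() -split '\s+').Count -ne 6) {
        throw "WebJob schedules need six CRON fields, got '$Schedule'."
    }

    # Stage the files in a fresh temporary folder so the archive root holds only them
    $staging = Join-Path ([IO.Path]::GetTempPath()) ([guid]::NewGuid().ToString())
    New-Item -ItemType Directory -Path $staging | Out-Null
    try {
        Copy-Item -Path $ScriptPath -Destination (Join-Path $staging 'run.ps1')
        @{ schedule = $Schedule } | ConvertTo-Json |
            Set-Content -Path (Join-Path $staging 'settings.job') -Encoding ASCII
        Compress-Archive -Path (Join-Path $staging '*') -DestinationPath $ZipPath -Force
    }
    finally {
        # Always remove the staging folder, even if packaging fails
        Remove-Item -Path $staging -Recurse -Force
    }
}
```

For example, `New-RestartWebJobPackage -ScriptPath .\restart.ps1 -ZipPath .\restart-job.zip` produces an archive with run.ps1 and settings.job at its root.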

That’s it. Just make sure Always On is enabled for your App Service; without it, the app can be unloaded when idle and the WebJob will not run on schedule.

To test the job, you can start it manually here (the Start button) and check the execution results in the Kudu dashboard (the Logs button).
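Kudu also exposes a REST API for triggered WebJobs, which can be convenient for testing from a script: a POST to `https://<app>.scm.azurewebsites.net/api/triggeredwebjobs/<job name>/run` starts the job, and a GET on `.../history` lists past runs. The sketch assumes Basic authentication with the app's deployment (publishing) credentials; `New-KuduWebJobRequest` is a hypothetical helper that only builds the request, so it can be checked without network access.

```powershell
function New-KuduWebJobRequest {
    # Hypothetical helper: builds the URI, method and Basic auth header for a Kudu triggered-WebJob call.
    param(
        [Parameter(Mandatory = $true)] [string] $AppName,
        [Parameter(Mandatory = $true)] [string] $JobName,
        [Parameter(Mandatory = $true)] [string] $UserName,   # publishing user, e.g. '$myapp'
        [Parameter(Mandatory = $true)] [string] $Password,
        [ValidateSet('run', 'history')] [string] $Action = 'run'
    )

    # Basic auth token: base64 of "user:password"
    $pair = '{0}:{1}' -f $UserName, $Password
    $token = [Convert]::ToBase64String([Text.Encoding]::ASCII.GetBytes($pair))
    # Starting a job is a POST; reading its history is a GET
    $method = 'Get'
    if ($Action -eq 'run') { $method = 'Post' }

    [pscustomobject]@{
        Uri     = "https://$AppName.scm.azurewebsites.net/api/triggeredwebjobs/$JobName/$Action"
        Method  = $method
        Headers = @{ Authorization = "Basic $token" }
    }
}

# Example (performs a real HTTP call, so it is left commented out):
# $req = New-KuduWebJobRequest -AppName 'myapp' -JobName 'restart' -UserName '$myapp' -Password '<publishing password>'
# Invoke-RestMethod -Uri $req.Uri -Method $req.Method -Headers $req.Headers
```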